Discovery Outcomes – Electronic Questionnaire (eQ)

As quickly as it began, our 2-week Discovery is over. We’ve gained a great deal of insight into how ONS questionnaires are authored, reviewed and responded to. We have a backlog for the Alpha and have engaged with stakeholders across the organisation. I’ll share some details of what has happened since week 1, along with the main outcomes of our Discovery, which will feed into the Alpha that started this week.

Users

From the interviews, existing research and discussions during the Discovery, we have identified five main groups of users for our eQ platform:

- Authors: Users who create, build, edit and maintain questionnaires which are used to gather data.

- Reviewers: Users who provide quality assurance and testing of questionnaires.

- Respondents: Users who complete questionnaires. They can respond for themselves or as a proxy for someone else or another entity (e.g. a business).

- Interviewers: Users who interview respondents to complete a questionnaire.

- Admins: Internal users who will administer and support the live platform, providing customer service and front-line support.

The group of users that caused the most discussion within the team was Respondents. To explain why, it’s worth briefly describing what is involved in producing a questionnaire.

The ONS has teams (our Authors) working on each of the surveys we run, and these teams have extensive domain knowledge about the industry or topic their survey focuses on. They understand which questions need to be asked to gather the data required for the statistics the office produces, so they create a draft questionnaire containing a series of questions. Asking a question is more complicated than you might think, though. The wording must not influence the answer, every respondent must understand the question in the same way, and the questionnaire must be usable so that answers can actually be entered.

To produce a final questionnaire, the questions, their format, layout, etc. go through a series of tests, reviews and quality assurance processes (undertaken by our Reviewer users). This often involves interviewing real respondents to test how they understand and interpret the questions.

During the Discovery we thought carefully about this process and what it meant for the eQ platform. Would it require us to interview and run tests with every kind of respondent the ONS interacts with (a huge amount of work!)? Fortunately we came to a natural and logical conclusion: we aren’t setting out to replace the work the authors and reviewers undertake, but to provide them with the tools they need to do their job.

In practice, this means we will set out to ensure that questionnaires produced with the eQ are highly usable by any respondent, but it will be up to the authors and reviewers to ensure that the content, format and layout are right for the specific respondents being surveyed (e.g. comprehensible, unbiased, etc.).

So what does this mean? It means that we can focus our attention on the general usability of an electronic questionnaire for any respondent and support our authors and reviewers in producing questionnaires that meet their needs and those of their specific respondents.

Phew! Now that authors, reviewers and respondents are understood, I want to quickly clarify our interviewer and admin users. Interviewers are individuals working for the ONS who visit households to conduct interviews and complete questionnaires. They may have more power than a regular respondent, for example being able to skip or jump to specific questions. Admin users support the running platform; one example of their work is resetting forgotten passwords for other users.

To focus on the high-priority areas during the 12-week Alpha, we have decided that although we will consider the needs of interviewer and admin users, specifically designing and developing for them will be out of scope, at least until Beta. This means we are going to focus on Authors, Reviewers and Respondents as our primary users.

Story Map

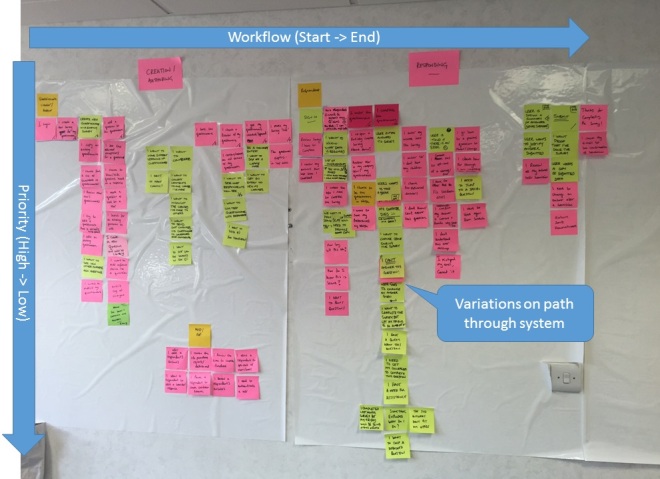

Towards the end of Discovery we ran a Story Mapping session with the whole team to build the initial backlog for the eQ. If you aren’t familiar with the concept of using Stories for development, Martin Fowler provides a quick intro. I’ve previously worked with flat backlogs in agile teams, but having been exposed to story maps and seen first-hand how such a simple concept can become a powerful visualisation technique, I can safely say I’ve been converted.

Story mapping was an excellent way for the whole team to understand the workflow of our different users as they progress through the system. It also gave everyone the opportunity to contribute ideas, questions and concerns. A lot of different items went up on the map during this session, so it is quite ‘raw’ at this stage, but it has already been useful for explaining the system to our users, customers, sponsors, etc.

You can read the map by scanning left to right across it; this is the workflow through the system. For us, it runs from someone in the ONS logging in, creating a questionnaire, adding questions, getting them reviewed, and saving and publishing them. It continues with a respondent accessing that questionnaire, completing it and submitting their responses. Reading top to bottom shows variations in the workflow, for example an author copying an existing questionnaire rather than creating a new one. Vertical position also indicates priority: items at the top are essential, while those at the very bottom will most likely fall into the nice-to-have category. The work on the project will be driven by this map, initially by focusing on the top row and then working our way down the columns where we see the most value being added.

The team will continue to update the map, shifting items up or down to re-prioritise and adding or removing items as our understanding of our users’ needs evolves; it is a living document.

The ONS has sites in London and Titchfield as well as Newport, where we are based, so one issue we currently face is how best to share the story map with a wider audience, since it is physically located in the team room and constantly changing. One option is an electronic tool like Trello, although this means keeping the physical and electronic versions synchronised, which in my experience can become quite a burden. It may be more practical to share snapshots of the map at various points in time, or to summarise it into a higher-level view. I will post in future about how we solve this, but ideas are welcome.

Two-week Discovery?

The team concluded that a two-week Discovery felt very short, even with prior research available to build upon. It was a mixed blessing. On one hand, there was very little time for the team to get to know each other, get up to speed, undertake some critical research and bring together everything we needed to start the Alpha; on the other hand, it meant we had to be very focused on what needed doing and get the most out of the days available. In the end we concluded that we had enough in place to progress into the Alpha, but a little more breathing space would have been welcome!

Into the Alpha

The Discovery has ended and we have started our Alpha phase. This is a 12-week period which we’re splitting into short iterations (Sprints). The first iteration is one week long and is being used to set up the technical infrastructure so that we are ready to start delivering stories. After this comes a series of two-week iterations; at the end of each one we plan to deliver working software (chosen from our story map) that we can test with real users.

More to follow in future posts…

11 comments on “Discovery Outcomes – Electronic Questionnaire (eQ)”


Chris, great way to communicate and a great summary of the issues. Look forward to the next post.

Thanks Pete!

Thank you Chris – I found this very informative.

I was particularly interested in your description of “Interviewer” users. I see that they are out of scope for now, but I was of the understanding that the eQ would replace such people anyway, or have I misinterpreted?

Hi Nick, thanks for the interest. Although we aim to encourage as many people as possible to take part in our social surveys online, our expectation is that there will still be a need for interviewer-led data collection (face-to-face and telephone) as part of a future system.